PolyFit: Polygonal Surface Reconstruction from Point Clouds

ICCV 2017

Liangliang Nan, Peter Wonka

Visual Computing Center, KAUST

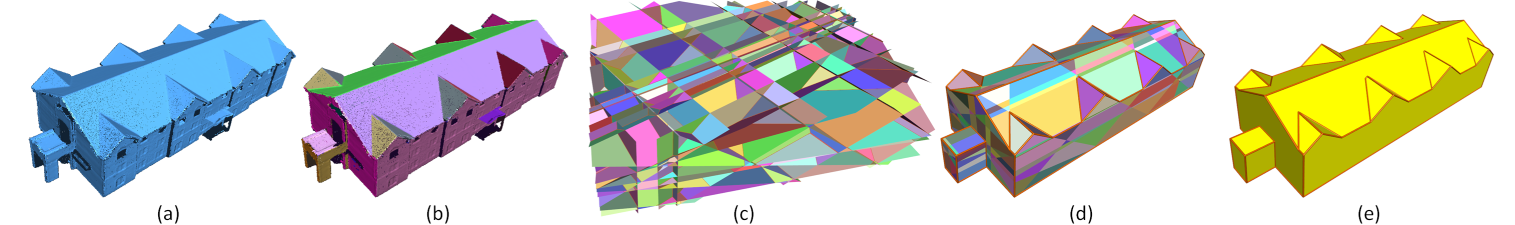

Figure 1: Pipeline. (a) Input point cloud. (b) Planar segments. (c) Candidate faces generated using pairwise intersection. (d) Selected faces. (e) Reconstructed model. Planar segments and faces are randomly colored.

Abstract

We propose a novel framework for reconstructing lightweight polygonal surfaces from point clouds. Unlike traditional methods that focus on either extracting good geometric primitives or obtaining proper arrangements of primitives, this work emphasizes intersecting the primitives (planes only) and seeking an appropriate combination of them to obtain a manifold polygonal surface model without boundary. We show that reconstruction from point clouds can be cast as a binary labeling problem. Our method is based on a hypothesize-and-select strategy. We first generate a reasonably large set of face candidates by intersecting the extracted planar primitives. Then an optimal subset of the candidate faces is selected through optimization. Our optimization is based on a binary linear programming formulation under hard constraints that force the final polygonal surface model to be manifold and watertight. Experiments on point clouds from various sources demonstrate that our method can generate lightweight polygonal surface models of arbitrary piecewise planar objects. In addition, our method is capable of recovering sharp features and is robust to noise, outliers, and missing data.
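The face selection step described in the abstract can be illustrated with a toy example. The sketch below is not the authors' implementation. It assumes that the manifold and watertight requirement is expressed as "every edge is shared by either zero or two selected faces", it replaces the binary linear programming solver with brute-force enumeration (feasible only for a handful of candidates), and it uses made-up support scores in place of the real energy terms. All function and variable names (`build_candidates`, `select_faces`, `support`) are hypothetical.

```python
from collections import defaultdict
from itertools import product


def loop_edges(loop):
    """Return the undirected edges of a closed vertex loop."""
    n = len(loop)
    return [frozenset((loop[i], loop[(i + 1) % n])) for i in range(n)]


def build_candidates():
    """Candidate faces of a box cut by one spurious interior plane.

    Vertices are integer grid coordinates (i, j, k) with i in {0, 1, 2};
    the plane i == 1 is the interior one and splits the four side faces.
    """
    faces = {}
    for i in range(3):  # three planes orthogonal to the x axis
        faces[f"x{i}"] = [(i, 0, 0), (i, 1, 0), (i, 1, 1), (i, 0, 1)]
    for s in range(2):  # two slabs, one on each side of the interior plane
        for j in range(2):
            faces[f"y{j}_slab{s}"] = [(s, j, 0), (s + 1, j, 0), (s + 1, j, 1), (s, j, 1)]
        for k in range(2):
            faces[f"z{k}_slab{s}"] = [(s, 0, k), (s + 1, 0, k), (s + 1, 1, k), (s, 1, k)]
    return faces


def select_faces(faces, support):
    """Pick the binary face labeling with the highest total support.

    Hard constraint (assumed): each edge has 0 or 2 selected incident faces.
    Brute force stands in for a binary linear programming solver.
    """
    names = sorted(faces)
    edge_faces = defaultdict(list)
    for idx, name in enumerate(names):
        for edge in loop_edges(faces[name]):
            edge_faces[edge].append(idx)

    best, best_score = None, float("-inf")
    for x in product((0, 1), repeat=len(names)):
        if any(sum(x[i] for i in inc) not in (0, 2) for inc in edge_faces.values()):
            continue  # violates the edge constraint
        score = sum(support[name] * xi for name, xi in zip(names, x))
        if score > best_score:
            best, best_score = x, score
    return [name for name, xi in zip(names, best) if xi]


faces = build_candidates()
# Made-up scores: outer faces are supported by points, the interior plane is not.
support = {name: (0.0 if name == "x1" else 1.0) for name in faces}
selected = select_faces(faces, support)
print(selected)
```

With these scores, the only feasible labelings are the empty set, the full box, and the two half boxes that use the interior face, so the enumeration keeps the ten outer faces and rejects `x1`. A real problem has far too many candidates for enumeration, which is why a dedicated integer programming solver is needed there.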

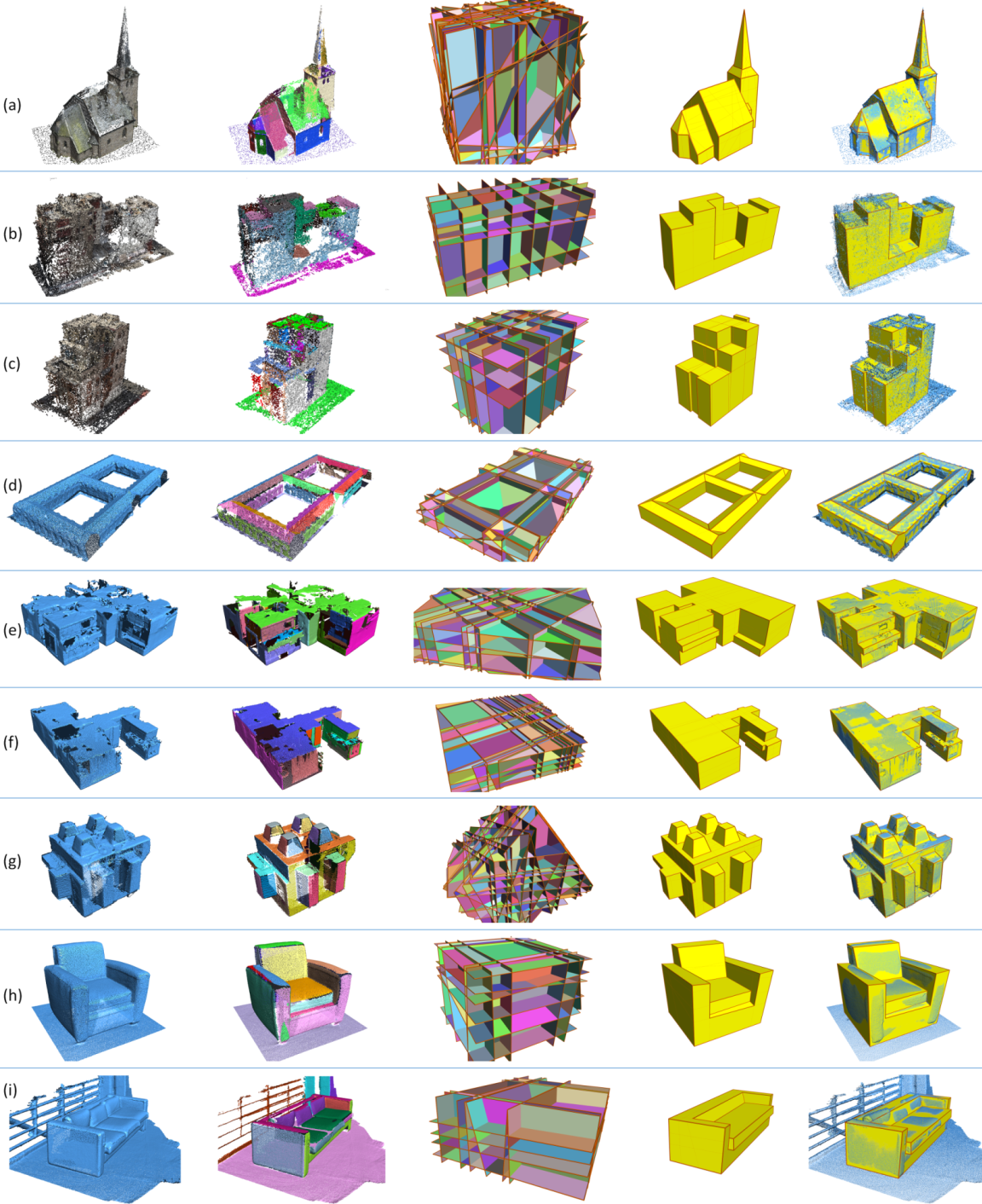

Results

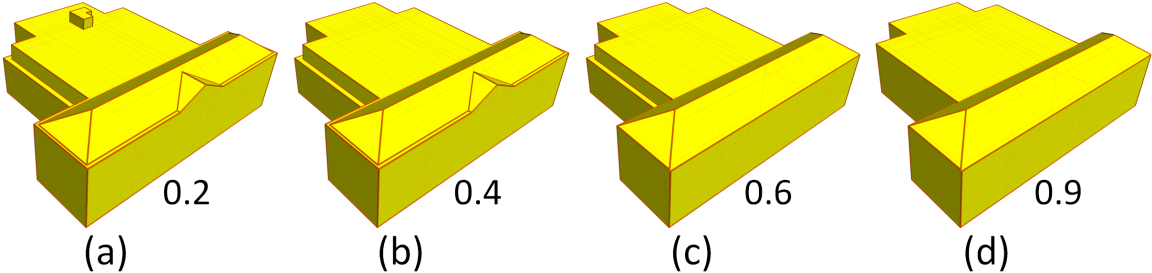

Figure 3: Reconstruction of a building by gradually increasing the influence of the model complexity term. The values under each figure are the weights used in the corresponding optimization.

Figure 4: Comparison with four state-of-the-art methods on a building dataset. (a) Input point cloud. (b) Model reconstructed by the Poisson surface reconstruction algorithm [11]. (c) The result of the 2.5D Dual Contouring approach [27]. (d) The result of [15]. (e) The result of [16]. (f) Our result. The number under each sub-figure indicates the total number of faces in the corresponding model.

Paper [8M.pdf]

Presentation [20M.pptx]

Video [12M.mp4]

Source Code at GitHub

Source Code at CGAL & User Manual

Executable at GitHub

Data & Results [580M.zip]

Acknowledgements

We would like to thank Florent Lafarge, Michael Wimmer, and Pablo Speciale for providing us with the data used in Figures 4 (d), (a), and (e)-(f), respectively. We also thank Tina Smith for recording the voice-over for the video. This research was supported by the KAUST Office of Sponsored Research (award No. OCRF-2014-CGR3-62140401) and the Visual Computing Center (VCC) at KAUST.

Citation

@inproceedings{nan2017polyfit,
  title = {PolyFit: Polygonal Surface Reconstruction from Point Clouds},
  author = {Nan, Liangliang and Wonka, Peter},
  booktitle = {ICCV},
  year = {2017}
}